Llama Without SaaS Tax.

Run Llama on your infrastructure. Zero data leaves your network. GPTfy adds masking and audit trails on top.

95% of enterprises say sovereign AI will be mission-critical within 3 years, but only 13% have achieved it (EDB, 2025).

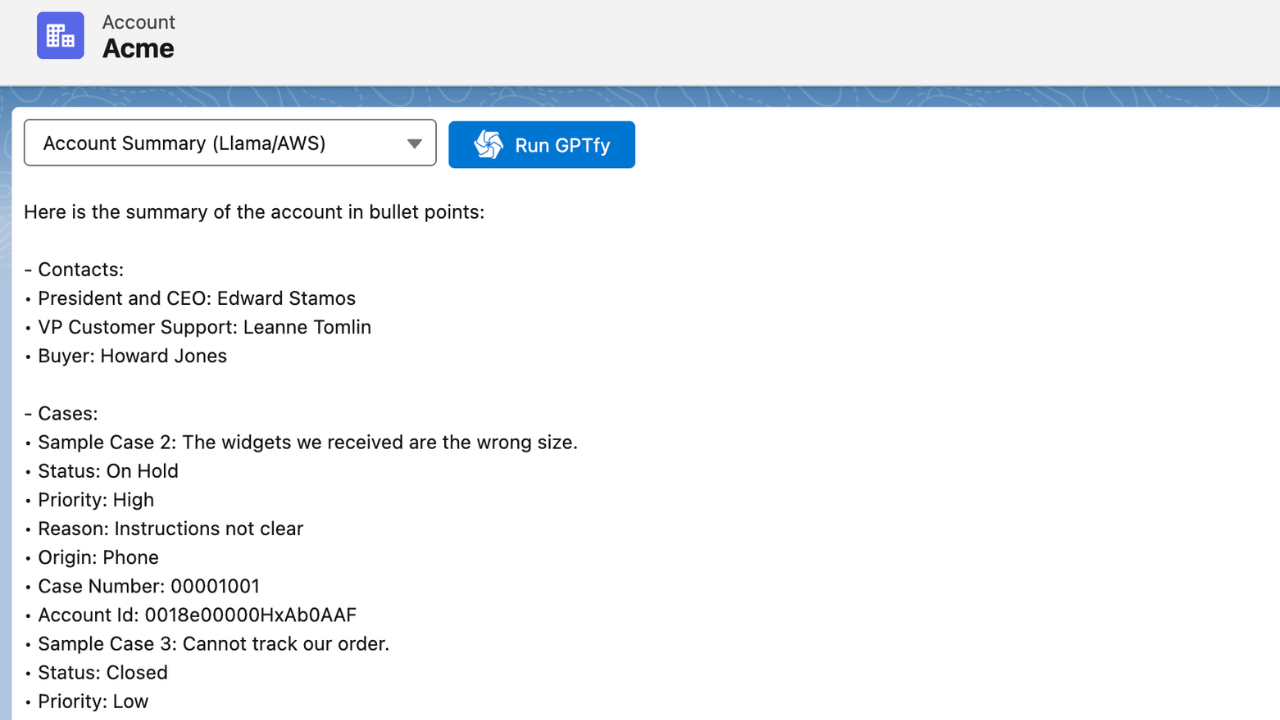

See self-hosted Llama run inside Salesforce with zero data leaving your network

Your Llama instance, your infrastructure, your Salesforce data — all connected through GPTfy Named Credentials.

Compliance Said No External APIs.

Regulated orgs cannot send CRM data to third-party AI. Self-hosted is the only path forward.

Every external API is a risk

Commercial AI APIs require sending Salesforce data to infrastructure you don't control. For healthcare, financial services, and government, this blocks AI adoption entirely.

“I don't want to send accounts to a separate system.”

CTO, Financial Services

Secure this with Zero-Trust Architecture

Proprietary APIs with no exit

Building on a single vendor's proprietary API means switching costs compound over time. Pricing increases and terms changes happen on their schedule.

“I don't know at what stage Salesforce becomes financially not acceptable.”

CTO, Financial Services

Secure this with Data Masking

Regulators want proof, not promises

HIPAA, FINRA, and FedRAMP require demonstrable data controls. 'Our vendor promises not to store data' is not evidence. Self-hosted infrastructure with audit trails is.

“Business users can work on the platform - not a dev-heavy environment.”

CTO, Financial Services

Secure this with Audit Trails

Evaluating the cost of switching models?

See cost savings vs BYOM

Self-Hosted Deployment, Your Infrastructure, Your Rules

Connect Any Llama Endpoint to Salesforce

GPTfy connects to your Llama instance via its OpenAI-compatible API. Run Llama on AWS SageMaker, Azure ML, GCP Vertex AI, or bare metal. GPTfy sends data through a Named Credential. See the Llama in Salesforce demo.
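
For readers who want to see what an OpenAI-compatible call looks like on the wire, here is a minimal Python sketch that builds (but does not send) a chat completion request. It is not GPTfy's implementation. The `Connection` class, the hostname `llama.internal.example`, and the model identifier are hypothetical, and the `/v1/chat/completions` path is the common convention for OpenAI-compatible servers, so check the path your hosting stack actually exposes.

```python
import json
import urllib.request
from dataclasses import dataclass


@dataclass(frozen=True)
class Connection:
    """Hypothetical stand-in for the endpoint and model settings of a connection."""
    base_url: str              # assumption: the URL you would store in a Named Credential
    model: str                 # assumption: whatever identifier your endpoint serves
    temperature: float = 0.2
    max_tokens: int = 512


def build_chat_request(conn: Connection, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    body = {
        "model": conn.model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": conn.temperature,
        "max_tokens": conn.max_tokens,
    }
    return urllib.request.Request(
        url=conn.base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical internal endpoint and model name, for illustration only.
conn = Connection(base_url="https://llama.internal.example", model="llama-3.3-70b-instruct")
req = build_chat_request(conn, "Summarize the account notes for [NAME_1].")
print(req.full_url)
print(json.loads(req.data)["model"])
```

Because the endpoint and model live in one small configuration object, pointing the same request at a different model version means changing two values rather than the calling code.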

Full Model Control

Choose Llama 3.3, 3.1, or fine-tuned variants. Set inference parameters from the AI Connection record. Switch endpoints or model versions without code changes.

Data Sovereignty, Nothing Leaves Your Network

Complete Data Isolation

The entire AI pipeline stays inside your network. Salesforce sends data to your endpoint, Llama processes it, and the response returns. No third party sees your data. Learn more about GPTfy's zero-trust architecture.

PII Masking as Defense in Depth

Even with self-hosted deployment, GPTfy's Security Layer masks PII before the callout. If logs capture request payloads or other systems access your endpoint, PII remains masked. Belt and suspenders for regulated industries.
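
To make the idea of masking before the callout concrete, here is a minimal Python sketch that swaps email addresses and US-style phone numbers for numbered placeholders. It is an illustration of the general technique, not GPTfy's Security Layer. The two regular expressions, the placeholder format, and the `mask_pii` function are assumptions chosen for the example, and real deployments cover many more data types.

```python
import re

# Deliberately simple patterns for illustration; production rules are broader.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def mask_pii(text: str) -> tuple[str, dict[str, str]]:
    """Replace emails and phone numbers with numbered placeholders.

    Returns the masked text and a placeholder -> original mapping that
    stays on the caller's side and is never sent to the model.
    """
    mapping: dict[str, str] = {}

    def substitute(pattern: re.Pattern, label: str, source: str) -> str:
        def repl(match: re.Match) -> str:
            token = f"[{label}_{len(mapping) + 1}]"
            mapping[token] = match.group(0)
            return token
        return pattern.sub(repl, source)

    masked = substitute(EMAIL, "EMAIL", text)
    masked = substitute(PHONE, "PHONE", masked)
    return masked, mapping


masked, mapping = mask_pii("Contact jane.doe@example.com or 555-123-4567 about renewal.")
print(masked)  # Contact [EMAIL_1] or [PHONE_2] about renewal.
```

Only the masked string would go into the request payload, so a log line or monitoring tool that captures the payload sees placeholders rather than the original values.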

Regulated Industry Compliance, Solved by Architecture

HIPAA, FINRA, FedRAMP Compatibility

Healthcare, financial services, and government cannot send data to external AI APIs. Self-hosted Llama solves this architecturally. Review GPTfy's compliance certifications for HIPAA, SOC 2, and GDPR details.

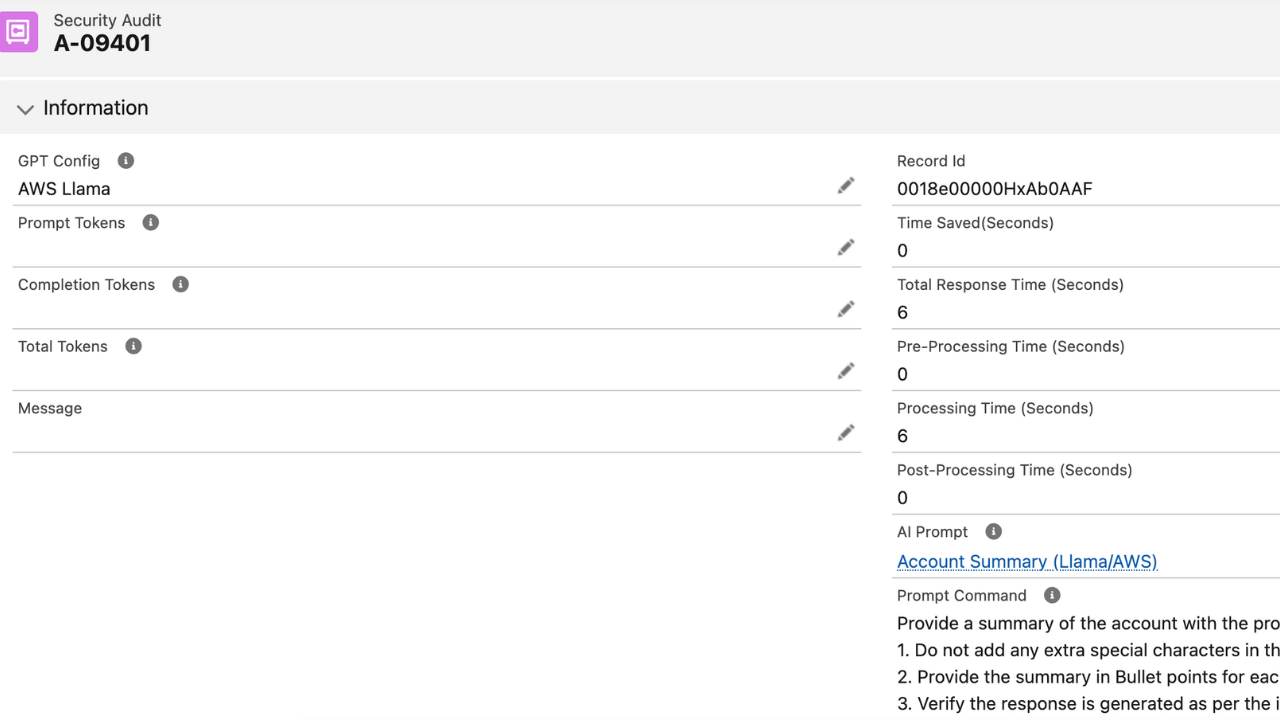

Auditable AI Operations

Every Llama request creates an AI Response record: masked prompt, response, token count, timestamp. Compliance can demonstrate exactly what was processed and what safeguards were active.

Why Choose Llama Integration

Your Infrastructure, Zero Third-Party Access

Llama runs on your servers. Salesforce connects to your endpoint. No data reaches Meta, OpenAI, or any external provider. The strongest data sovereignty posture available.

Defense-in-Depth PII Masking

GPTfy masks PII even for self-hosted models. If internal logs capture request payloads or other systems access your endpoint, sensitive data stays protected.

Regulated Industry Ready

HIPAA, FINRA, FedRAMP compliance solved by architecture. Data never leaves your infrastructure. AI Response audit trail satisfies regulatory evidence requirements.

Powerful Capabilities

Self-Hosted Deployment

Connect Llama on AWS SageMaker, Azure ML, GCP Vertex AI, or bare metal. GPTfy sends data through Named Credentials with no third-party infrastructure involved.

Zero Third-Party Access

Your Llama endpoint URL lives in a Salesforce Named Credential. Data flows from Salesforce to your servers and back. No external AI provider sees your data.

Defense-in-Depth Masking

GPTfy's Security Layer masks PII before every callout, even to self-hosted endpoints. If logs capture payloads or other systems access your instance, data stays protected.

AI Response Audit Trail

Every Llama request creates an AI Response record: masked prompt, response, token count, and timestamp. Query with SOQL or reports for compliance evidence.
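
The shape of such a record can be sketched in a few lines of Python. This is only a model of the four fields named above, with a time-window filter standing in for the kind of question a compliance query would ask. The `AuditRecord` class, its field names, and both helper functions are hypothetical and do not reflect the actual Salesforce object schema.

```python
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical in-memory model of one audit entry."""
    masked_prompt: str
    response: str
    token_count: int
    timestamp: str  # ISO 8601 string in UTC


def make_audit_record(masked_prompt, response, token_count, now=None):
    """Create a record, stamping it with the current UTC time by default."""
    now = now or datetime.now(timezone.utc)
    return AuditRecord(masked_prompt, response, token_count, now.isoformat())


def records_between(records, start, end):
    """Return records whose timestamp falls in the half-open window [start, end)."""
    return [r for r in records if start <= datetime.fromisoformat(r.timestamp) < end]


rec = make_audit_record(
    "Summarize the account notes for [NAME_1].",
    "Summary text returned by the model.",
    42,
    now=datetime(2025, 1, 15, 12, 0, tzinfo=timezone.utc),
)
january = records_between(
    [rec],
    datetime(2025, 1, 1, tzinfo=timezone.utc),
    datetime(2025, 2, 1, tzinfo=timezone.utc),
)
print(len(january), asdict(rec)["token_count"])
```

Storing the masked prompt rather than the raw one means the audit evidence itself does not become a new copy of the sensitive data.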

Key Takeaways

- Llama runs on your own infrastructure (AWS SageMaker, Azure ML, GCP Vertex AI, or bare metal) with zero third-party data access.

- GPTfy connects to your self-hosted Llama endpoint via its OpenAI-compatible API through a Salesforce Named Credential.

- The entire AI pipeline stays inside your network: Salesforce sends data to your endpoint, Llama processes it, and the response returns.

- GPTfy's Security Layer masks PII before every callout, even for self-hosted endpoints, as defense in depth against exposure in internal logs.

- Supports Llama 3.3, 3.1, and fine-tuned variants; update the model field on the AI Connection record to adopt new versions.

- Every Llama request creates an AI_Response__c record with masked prompt, response, and token count for compliance evidence.

Frequently Asked Questions

GPTfy connects to any Llama endpoint that exposes an OpenAI-compatible API (which most hosting solutions provide). You configure your endpoint URL in a Salesforce Named Credential and reference it in a GPTfy AI Connection record. GPTfy sends Salesforce data to your endpoint through standard HTTPS callouts. The endpoint can run on AWS SageMaker, Azure ML, GCP Vertex AI, or any server with a compatible API.

Defense in depth. Even with self-hosted deployment, request payloads may appear in application logs, monitoring systems, or other internal tools that access your Llama endpoint. PII masking ensures that sensitive data is protected at every layer, not just at the network boundary. It also satisfies compliance auditors who require data minimization regardless of hosting location.

GPTfy works with any Llama model that exposes an OpenAI-compatible API endpoint. This includes Llama 3.3, Llama 3.1, Llama 3, and fine-tuned variants. You specify the model identifier on the AI Connection record. As Meta releases new versions, you can adopt them by updating your deployment and the model field on your AI Connection record.

Self-hosted Llama means your data never leaves your infrastructure because the model runs inside your network perimeter. For HIPAA (healthcare), FINRA (financial), and FedRAMP (government) compliance, this eliminates the data residency and third-party access concerns that block AI adoption. Combined with GPTfy's PII masking and AI Response audit trail, you can demonstrate to regulators exactly what data was processed and what safeguards were in place.

Self-hosted Llama eliminates per-token API costs. You pay for compute infrastructure (which you may already have) but avoid per-request charges that scale with usage. For high-volume Salesforce use cases (batch Account analysis, automated Case processing, Opportunity scoring across thousands of records), self-hosted Llama can reduce AI costs significantly compared to commercial API pricing.
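
The trade-off between fixed compute cost and per-token pricing can be worked through with simple arithmetic. The Python sketch below computes the monthly token volume at which a fixed infrastructure bill equals metered API spend. The figures in the example (3,000 per month of compute, 10 per million tokens) are placeholders chosen to make the arithmetic easy to follow, not real prices from any vendor, and the function names are hypothetical.

```python
def monthly_api_cost(tokens_per_month: int, price_per_million_tokens: float) -> float:
    """Metered cost for a month at a flat per-million-token price."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def break_even_tokens(fixed_compute_cost: float, price_per_million_tokens: float) -> float:
    """Monthly token volume at which fixed compute cost equals metered cost."""
    return fixed_compute_cost / price_per_million_tokens * 1_000_000


# Placeholder inputs for illustration only; substitute your own numbers.
compute_cost = 3000.0          # fixed monthly infrastructure cost
price_per_million = 10.0       # metered price per million tokens

threshold = break_even_tokens(compute_cost, price_per_million)
metered = monthly_api_cost(500_000_000, price_per_million)
print(threshold, metered)
```

With these placeholder inputs the break-even point is 300 million tokens per month. Below that volume the metered API is cheaper, and above it the fixed-cost deployment is, which is why the savings show up mainly in high-volume batch workloads.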

Yes. Since GPTfy uses the same Named Credential pattern for all model integrations, you can switch from a self-hosted Llama endpoint to OpenAI, Claude, or Gemini by changing the endpoint URL and AI model identifier on your AI Connection record. Your prompts, Salesforce configurations, and audit trails remain intact.

See Self-Hosted AI Connected to Salesforce

30-minute demo. Self-hosted Llama endpoint, PII masking, audit trail, and zero data leaving your network.

Explore More Features

DeepSeek R1

Chain-of-thought reasoning at 5-10x lower cost.

OpenAI in Salesforce

GPT-5.2 with function calling for structured outputs.

Zero-Trust Architecture

Enterprise AI security with zero external data exposure.

Data Masking

Defense-in-depth PII protection even for self-hosted models.

Audit Trails

Compliance evidence for every AI interaction.

What Is BYOM?

Guide to Bring Your Own Model architecture for Salesforce AI.

GPTfy vs Einstein

Open BYOM architecture vs Einstein Trust Layer — see the side-by-side breakdown.