DeepSeek R1 Reasoning at Up to 1/10th the Cost of GPT-4o

Route heavy reasoning to DeepSeek R1, lighter tasks to faster models. Same masking, same audit trails.

37% of enterprises now use five or more AI models in production, reflecting demand for model flexibility (TypeDef AI, 2025).

See DeepSeek R1 running inside Salesforce at a fraction of GPT-4o cost

Reasoning-class AI in your Salesforce org — without OpenAI pricing. GPTfy connects DeepSeek through Named Credentials.

AI Budget Approved. ROI Still Missing.

Frontier-model pricing makes batch processing uneconomical. Reasoning stays opaque. Finance wants answers.

Per-token pricing scales unpredictably

Processing thousands of records daily at frontier pricing turns batch Account analysis, Case triage, and Opportunity scoring into line items that finance questions every quarter.

“I don't know at what stage Salesforce becomes financially not acceptable”

- CTO, Financial Services

Connect your model via Bring Your Own Model

The model says X but can't show why

When AI recommends dropping a deal or escalating a case, your team needs the reasoning chain. Conclusions without evidence create trust problems with compliance.

“I'm a firm believer of deploying pilots and seeing the value than just looking at presentations.”

- CTO, Financial Services

Secure this with Audit Trails

Paying a premium for simple tasks

With a single frontier model, email summarization costs the same per token as complex deal coaching. Without multi-model routing, you pay reasoning prices for classification tasks.

“Why should that cost you a couple of million dollars?”

- IT Leader, Manufacturing/CPQ

Secure this with Security Layer

Evaluating the cost of switching models?

See cost savings vs BYOM

Chain-of-Thought Reasoning, Visible in Your Audit Trail

Transparent Reasoning Steps

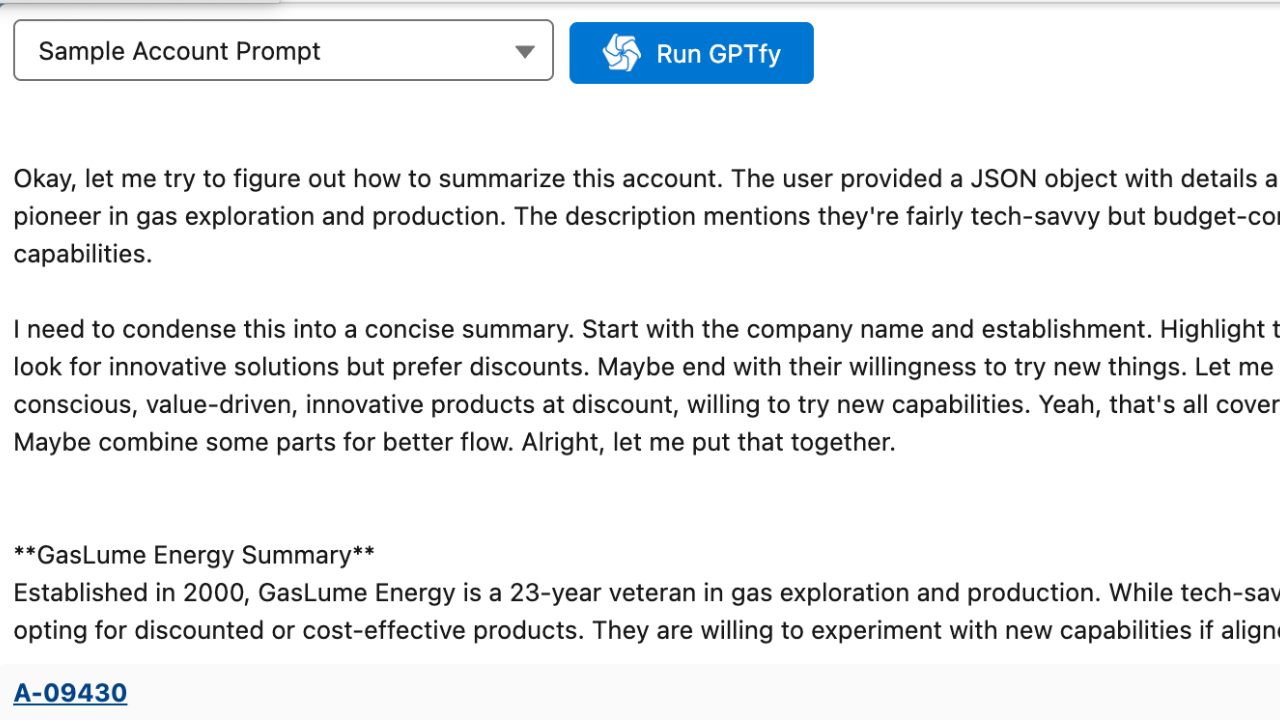

(DeepSeek R1 shows its reasoning chain before the final answer. GPTfy captures both the chain-of-thought and the response in the AI Response record. Your team sees how the model arrived at its analysis.)
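As an illustration of what capturing both parts can involve: DeepSeek's hosted API returns the chain-of-thought for its reasoning model in a separate `reasoning_content` field next to the usual `content`, while self-hosted R1 weights typically wrap the reasoning in `<think>...</think>` tags inside the content itself. The Python sketch below handles both shapes. It assumes an OpenAI-style chat completion dict; `split_reasoning` is a hypothetical helper, not GPTfy's implementation.

```python
import re
from typing import Any, Dict, Tuple

# Matches a leading <think>...</think> block, as typically emitted by self-hosted R1 weights.
THINK_BLOCK = re.compile(r"^\s*<think>(.*?)</think>\s*", re.DOTALL)


def split_reasoning(response: Dict[str, Any]) -> Tuple[str, str]:
    """Return (reasoning, answer) from an OpenAI-style chat completion dict.

    Hypothetical helper: checks the `reasoning_content` field first, then
    falls back to parsing <think> tags out of `content`.
    """
    message = response["choices"][0]["message"]
    content = message.get("content") or ""
    reasoning = message.get("reasoning_content")
    if reasoning:
        # Hosted API shape: reasoning and answer arrive in separate fields.
        return reasoning.strip(), content.strip()
    match = THINK_BLOCK.match(content)
    if match:
        # Self-hosted shape: cut the <think> block out of the answer text.
        return match.group(1).strip(), content[match.end():].strip()
    # No reasoning present: treat the whole content as the answer.
    return "", content.strip()


hosted = {"choices": [{"message": {"reasoning_content": "Close date slipped twice.",
                                   "content": "Flag as at-risk."}}]}
print(split_reasoning(hosted))  # ('Close date slipped twice.', 'Flag as at-risk.')
```

Storing the two parts separately, rather than one concatenated string, is what lets a reviewer read the reasoning without it being mistaken for the answer.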

Complex Salesforce Analysis

(Chain-of-thought excels at multi-step tasks: calculating pipeline weighted values, identifying renewal risk across related objects, synthesizing Case escalation patterns. See the DeepSeek demo.)

Cost Optimization, 5-10x Lower Than GPT-4o

Per-Token Cost Advantage

DeepSeek R1 delivers reasoning-class performance at 5-10x lower per-token cost. For high-volume Salesforce use cases (batch Account analysis, automated Case triage, Opportunity scoring), the savings grow with every record processed.

Multi-Model Routing Through GPTfy

(Use DeepSeek R1 for reasoning-heavy tasks and switch to GPT-4o-mini or Gemini Flash for simpler tasks. GPTfy's AI Connection records route each use case to the most cost-effective model. No Apex changes.)
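Conceptually, per-template routing is a lookup from prompt template to connection. The sketch below assumes hypothetical template names and a simplified `AIConnection` type; `deepseek-reasoner` is the model name DeepSeek's API has used for R1 and `gpt-4o-mini` is OpenAI's, but everything else is illustrative. In GPTfy the mapping is held in AI Connection records rather than code.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class AIConnection:
    """Hypothetical stand-in for an AI Connection record."""
    name: str
    provider: str
    model: str


# Illustrative connections; model names are examples only.
DEEPSEEK_R1 = AIConnection("DeepSeek R1", "deepseek", "deepseek-reasoner")
GPT4O_MINI = AIConnection("GPT-4o mini", "openai", "gpt-4o-mini")

# Each prompt template points at exactly one connection (hypothetical template names).
TEMPLATE_ROUTES: Dict[str, AIConnection] = {
    "Account 360 Analysis": DEEPSEEK_R1,
    "Pipeline Risk Scoring": DEEPSEEK_R1,
    "Email Summary": GPT4O_MINI,
}


def route(template_name: str) -> AIConnection:
    """Return the connection configured for a template; fail loudly if none is set."""
    try:
        return TEMPLATE_ROUTES[template_name]
    except KeyError:
        raise ValueError(f"No AI Connection configured for template {template_name!r}") from None


print(route("Email Summary").model)  # gpt-4o-mini
```

Keeping the mapping as data rather than logic is what allows a template to move to a different model without touching the calling code.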

Same Security Layer, Regardless of Model

PII Masking on Every DeepSeek Request

(GPTfy's Security Layer masks PII using the same configurable regex patterns whether you send data to DeepSeek, OpenAI, or Claude. Names, SSNs, emails, and phone numbers are masked in Apex before the callout.)
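Pattern-based masking of this kind can be sketched in a few lines. This version assumes simple, US-style regexes and a hypothetical placeholder format (`[EMAIL_1]`, `[SSN_1]`, and so on), and it returns a map from placeholder to original value as an illustrative design choice. GPTfy's actual patterns are configurable and run in Apex; names are hard to catch with regex alone and are left out of this sketch.

```python
import itertools
import re
from typing import Dict, List, Tuple

# Illustrative patterns only (US-style formats); real deployments configure their own.
# SSN runs before PHONE so the two never compete for the same digits.
PII_PATTERNS: List[Tuple[str, re.Pattern]] = [
    ("EMAIL", re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")),
    ("SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("PHONE", re.compile(r"\(?\b\d{3}\)?[-. ]?\d{3}[-. ]\d{4}\b")),
]


def mask_pii(text: str) -> Tuple[str, Dict[str, str]]:
    """Replace PII with numbered placeholders; return masked text and placeholder map."""
    mapping: Dict[str, str] = {}
    for label, pattern in PII_PATTERNS:
        numbers = itertools.count(1)  # restart numbering for each PII type

        def placeholder(match: re.Match, label: str = label, numbers=numbers) -> str:
            token = f"[{label}_{next(numbers)}]"
            mapping[token] = match.group(0)  # remember the original value
            return token

        text = pattern.sub(placeholder, text)
    return text, mapping


masked, originals = mask_pii("Reach jane.doe@example.com or (415) 555-0132; SSN 123-45-6789.")
print(masked)  # Reach [EMAIL_1] or [PHONE_1]; SSN [SSN_1].
```

Only the masked string would leave the org in the outbound request; the placeholder map stays local.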

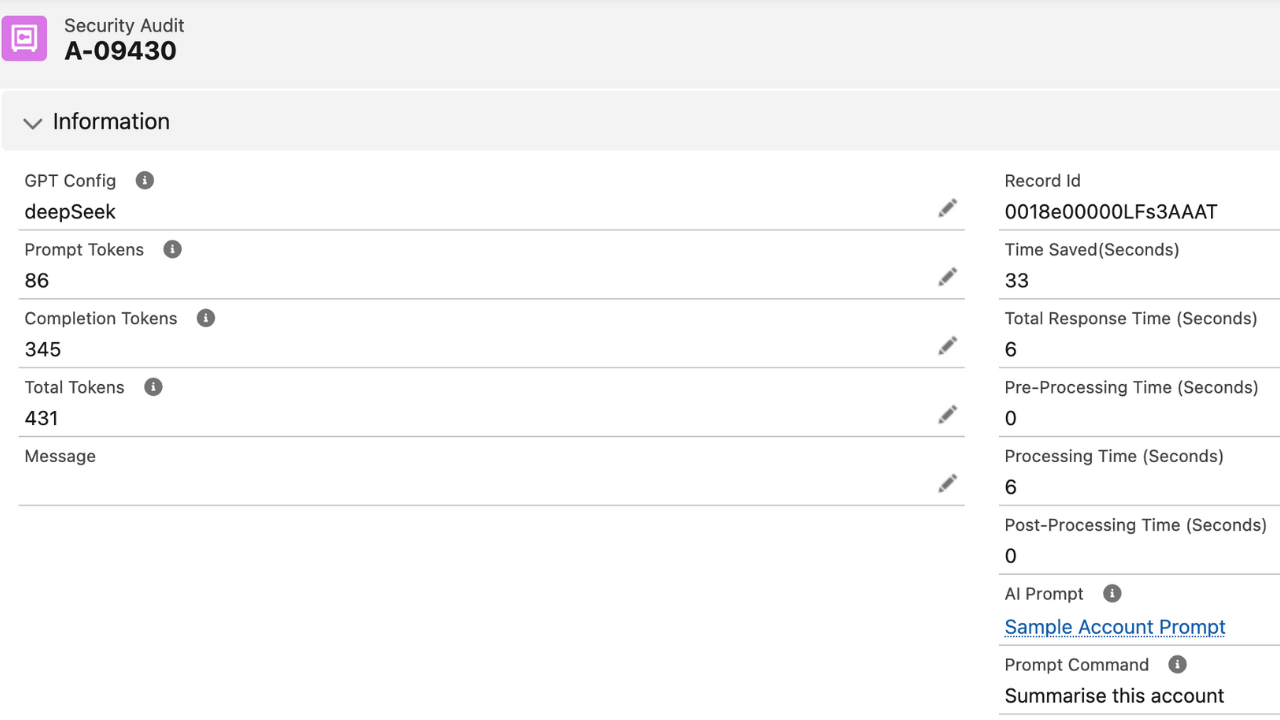

Named Credential + Audit Trail

(Your DeepSeek API key is stored in a Salesforce Named Credential, encrypted at rest. Every request creates an AI Response record with the masked prompt, response, token count, cost, and reasoning steps. Learn about GPTfy's zero-trust architecture.)
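One way to picture the audit entry: the sketch below assembles a record from an OpenAI-style response, with the field list taken from the description above. The `AuditRecord` type, the per-million-token price parameters, and the assumption that reasoning tokens are included in `completion_tokens` are all illustrative; check your provider's billing documentation for how reasoning output is counted.

```python
from dataclasses import dataclass
from typing import Any, Dict


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical mirror of the fields an AI Response record is described as holding."""
    masked_prompt: str
    reasoning: str
    response: str
    prompt_tokens: int
    completion_tokens: int
    cost_usd: float


def build_audit_record(masked_prompt: str, api_response: Dict[str, Any],
                       input_price_per_m: float, output_price_per_m: float) -> AuditRecord:
    """Assemble an audit entry from an OpenAI-style response and caller-supplied prices."""
    message = api_response["choices"][0]["message"]
    usage = api_response["usage"]
    prompt_tokens = usage["prompt_tokens"]
    # Assumption: completion_tokens already includes any reasoning tokens.
    completion_tokens = usage["completion_tokens"]
    # Prices are per million tokens; pass your provider's current rates.
    cost = (prompt_tokens * input_price_per_m + completion_tokens * output_price_per_m) / 1_000_000
    return AuditRecord(
        masked_prompt=masked_prompt,
        reasoning=(message.get("reasoning_content") or "").strip(),
        response=(message.get("content") or "").strip(),
        prompt_tokens=prompt_tokens,
        completion_tokens=completion_tokens,
        cost_usd=round(cost, 6),
    )


sample = {"choices": [{"message": {"reasoning_content": "Two open P1 Cases.", "content": "Escalate."}}],
          "usage": {"prompt_tokens": 1200, "completion_tokens": 800}}
record = build_audit_record("Summarize open Cases for [EMAIL_1]", sample, 1.0, 4.0)
print(record.cost_usd)  # 0.0044 with these placeholder prices
```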

Why Choose DeepSeek R1

5-10x Lower Cost

DeepSeek R1 delivers reasoning-class analysis at a fraction of GPT-4o pricing. For high-volume Salesforce use cases, the per-token savings add up to a significant budget reduction.

Chain-of-Thought Reasoning

See the model's reasoning steps, not just the conclusion. GPTfy captures the full chain-of-thought in the AI Response record for transparency and auditability.

Multi-Model Routing

Route reasoning-heavy tasks to DeepSeek and simpler tasks to lighter models. GPTfy's AI Connection records let you assign different models per prompt template without code changes.

Powerful Capabilities

Chain-of-Thought Reasoning

DeepSeek R1 exposes its step-by-step reasoning chain. GPTfy captures both the thinking process and final answer in the AI Response record for full auditability.

5-10x Cost Reduction

Reasoning-class performance at a fraction of GPT-4o per-token cost. GPTfy tracks token usage per request so you can measure exact savings across use cases.

Multi-Model Routing

Assign DeepSeek R1 for complex analysis and lighter models for simple tasks through AI Connection records. Each prompt template specifies its own model.

AI Response Audit Trail

Every DeepSeek request creates an AI Response record with the masked prompt, reasoning chain, final response, token count, and cost for compliance.

Key Takeaways

- DeepSeek R1 delivers chain-of-thought reasoning at 5-10x lower per-token cost than GPT-4o for Salesforce batch analysis.

- GPTfy captures both the reasoning chain and the final answer in the AI_Response__c record for full auditability.

- The DeepSeek API key is stored in a Salesforce Named Credential, encrypted at rest and excluded from metadata deployments.

- GPTfy's Security Layer applies identical PII masking to DeepSeek requests as to any other connected AI model.

- Multi-model routing via AI Connection records lets you assign DeepSeek R1 to reasoning-heavy prompts and lighter models to simpler tasks.

- GPTfy tracks per-request token counts and costs so you can measure exact savings from routing tasks to DeepSeek.

Frequently Asked Questions

DeepSeek R1 costs approximately 5-10x less per token than GPT-4o while delivering comparable reasoning performance. For a batch job analyzing 500 Accounts, this difference can reduce AI costs from hundreds of dollars to tens of dollars per run. GPTfy's AI Response records track exact token counts and costs per request for precise budget management.
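The arithmetic behind a per-run estimate is: cost per run = records × (input tokens × input price + output tokens × output price), with prices quoted per million tokens. The sketch below uses deliberately made-up token counts and rates; none of the numbers are real price quotes, so substitute each provider's current list prices and your own measured token counts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Per-million-token prices in USD. Values used below are placeholders, not real rates."""
    input_per_m: float
    output_per_m: float


def batch_cost(records: int, input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Estimated USD cost of one batch run, assuming a fixed token profile per record."""
    per_record = (input_tokens * pricing.input_per_m + output_tokens * pricing.output_per_m) / 1_000_000
    return records * per_record


# Hypothetical token profile for one Account analysis prompt (reasoning counted as output).
RECORDS, IN_TOK, OUT_TOK = 500, 6_000, 3_000

frontier = Pricing(input_per_m=10.0, output_per_m=40.0)  # placeholder "frontier" rates
reasoner = Pricing(input_per_m=1.0, output_per_m=4.0)    # placeholder rates, 10x lower

for label, p in (("frontier", frontier), ("reasoner", reasoner)):
    print(f"{label}: ${batch_cost(RECORDS, IN_TOK, OUT_TOK, p):.2f} per run")
# frontier: $90.00 per run
# reasoner: $9.00 per run
```

Because both placeholder rates differ by the same factor, the run cost here scales by exactly that factor. With real prices the ratio also depends on each provider's input/output split and on how many reasoning tokens the model emits, which is why measured per-request token counts are the better basis for a budget.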

Chain-of-thought reasoning means the model shows its step-by-step logic before generating the final answer. For Salesforce analysis, this means you can see how the model calculated pipeline risk, identified renewal patterns, or prioritized Case severity. GPTfy captures both the reasoning chain and final response in the AI Response record for full transparency.

You can run DeepSeek R1 alongside other models. GPTfy's AI Connection records let you assign different models to different prompt templates. Use DeepSeek R1 for reasoning-heavy tasks like Account 360 analysis and pipeline scoring, and GPT-4o-mini for simpler tasks like email summarization. Each prompt template specifies its own AI Connection, so model routing happens automatically.

Switching to DeepSeek does not change how GPTfy secures your data. GPTfy's Security Layer applies identical PII masking, Named Credential authentication, and AI Response audit logging regardless of which model you use. The same regex patterns mask the same data types, the same Named Credential framework stores the API key, and the same AI Response object logs every request.

Deployment takes under 60 minutes. Install the GPTfy managed package, create a Named Credential with your DeepSeek API key, and configure an AI Connection record specifying the R1 model. No custom Apex code is required. If you already have GPTfy installed with another model, adding DeepSeek is a single new AI Connection record. The Security Layer, audit logging, and prompt templates work immediately.

GPTfy can run DeepSeek R1 at batch scale. It supports batch Apex execution and scheduled flows that process thousands of records through DeepSeek R1. Because R1's per-token cost is 5-10x lower than that of frontier models, high-volume use cases like nightly Account health scoring, weekly pipeline analysis, and daily Case triage become economically viable at scale. GPTfy's AI Response records track token usage and cost per batch run for budget visibility.

See Chain-of-Thought Reasoning on Your Salesforce Data

30-minute demo. DeepSeek R1 analyzing live Salesforce records with visible reasoning steps, cost comparison, and multi-model routing.

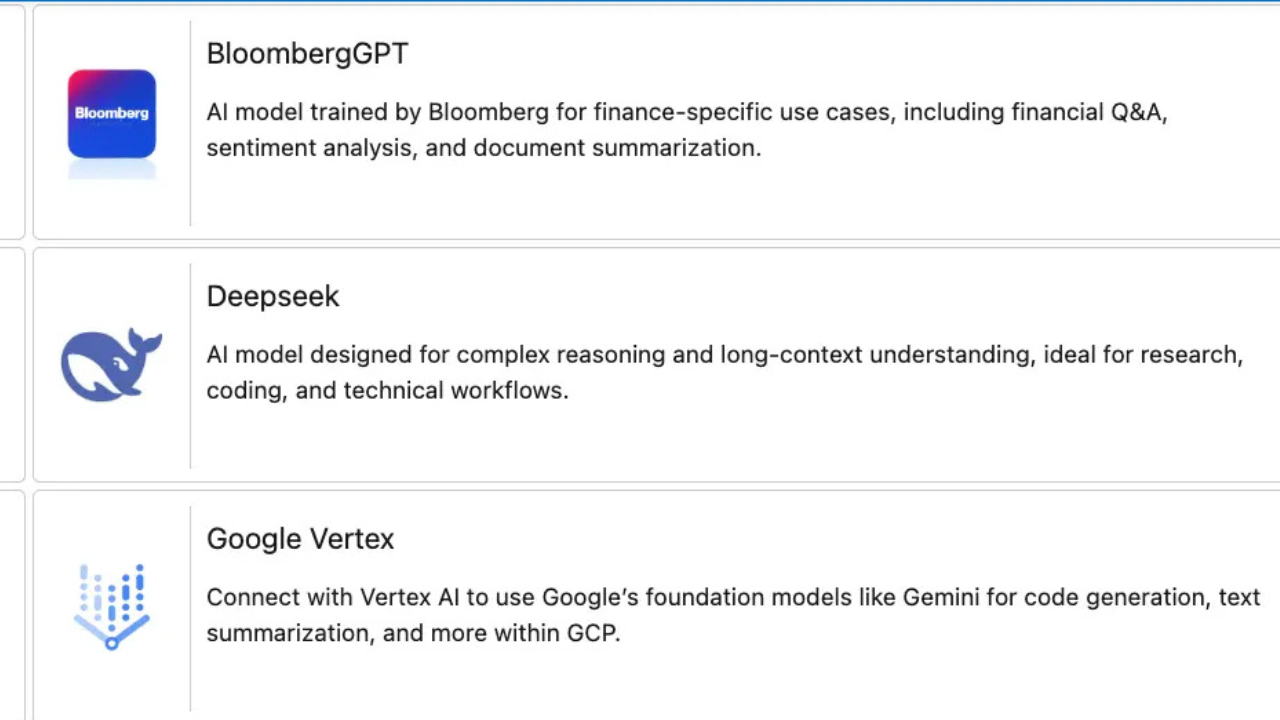

Explore More Features

OpenAI in Salesforce

GPT-5.2 with function calling for structured outputs.

Claude in Salesforce

200K context window for complete history analysis.

Llama (Self-Hosted)

Zero third-party data access on your infrastructure.

Prompt Builder

Configure prompts and route to cost-effective models.

Security Layer

PII masking, Named Credentials, and audit trails.

What Is BYOM?

Guide to Bring Your Own Model architecture for Salesforce AI.

GPTfy vs Einstein

Open BYOM architecture vs Einstein Trust Layer — see the side-by-side breakdown.