Account 360 Without Silent Data Loss.

Claude's 200K-token context window lets you send complete Account histories, multi-year Case threads, and full Opportunity timelines. No truncation.

95% of generative AI pilots fail; incomplete and truncated data context is the leading root cause (MIT, The GenAI Divide: State of AI in Business 2025).

See Claude's 200K context window working inside your Salesforce org

Long documents, complex contracts, extended case history — Claude reads all of it from your Salesforce records. 2-minute demo.

Your AI Silently Dropped Half the Data.

4K-32K context windows truncate Account history. The analysis looks complete. It isn't.

Older records silently dropped

Models with small context windows cut older Opportunities, Cases, and email threads. Your Account 360 looks complete but critical history is missing.

“Liked the easy and click/no-code way to configure GPT LLMs on any Salesforce object and go-live in days.”

- Gurditta Garg, Chief Salesforce Evangelist, Motorola

Secure this with Data Masking

No warning, no error

No error message. No warning. A renewal risk assessment that excluded 18 months of Case history isn't incomplete; it's misleading.

“The implementation was smooth and the results exceeded expectations.”

- Rishi Golyan, Salesforce Consultant, Algocirrus

Secure this with Audit Trails

Correlations lost across calls

Splitting data across multiple API calls means the model cannot correlate an Opportunity stall with a Case escalation from 8 months ago.

“GPTfy accurately understands user input and generates high-quality content in the right format.”

- Ankita Dhamgaya, Director and Founder, AlgoCirrus

Secure this with Security Layer

Evaluating the cost of switching models?

See cost savings vs BYOM

200K Context Window, Every Related Record Included

Full Record Context Without Truncation

Claude's 200K token window lets GPTfy send the complete Account record: every Opportunity (including closed-lost), every Case with full comment history, every Contact with activity logs. No silent truncation. Compare this with OpenAI's function-calling strengths for different use cases.

Cross-Object Intelligence

Claude sees patterns truncated models miss: a Case escalation that correlates with renewal risk, an Opportunity stall matching a support ticket from 8 months ago, a Contact unresponsive since a product incident.

PII Masking at Scale, 200K Tokens Fully Covered

Pattern-Based Masking on Large Payloads

GPTfy's Security Layer masks PII across the entire 200K token payload before any data reaches Anthropic. Names, SSNs, emails, phone numbers, and custom patterns are replaced in Apex before the callout. Larger payloads make automated masking essential.

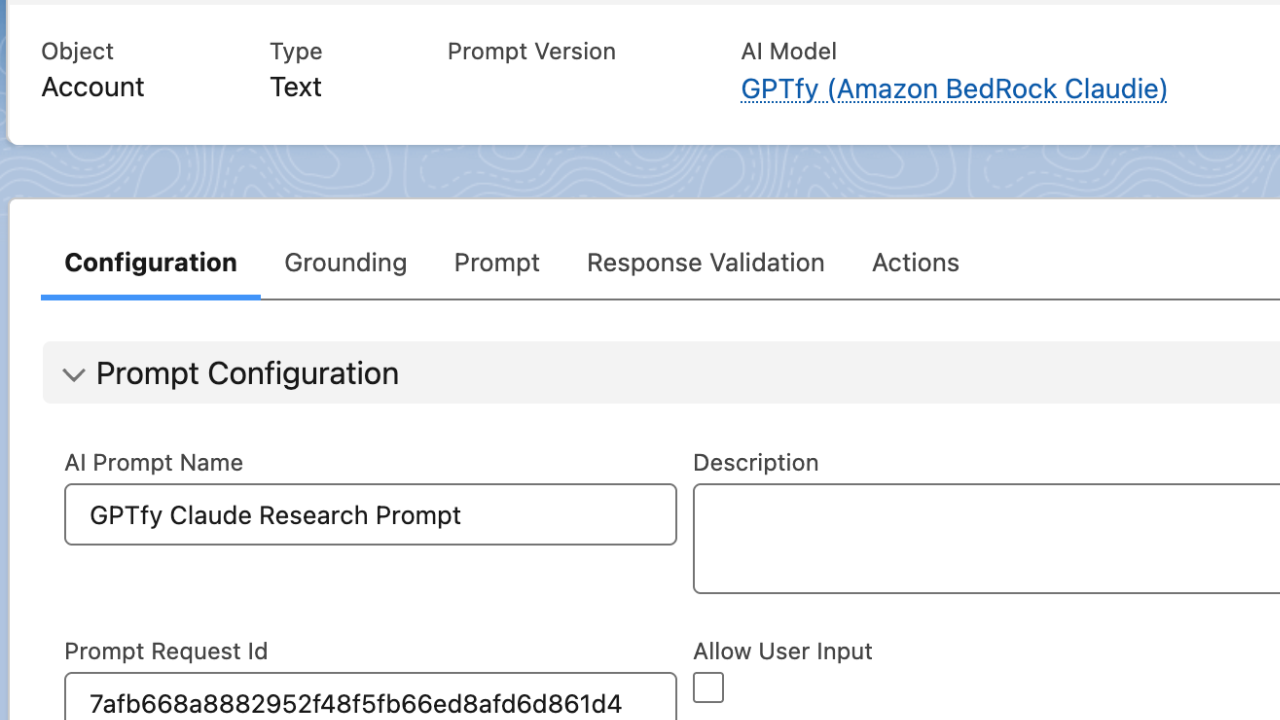

Named Credential Authentication

Your Anthropic API key lives in a Salesforce Named Credential, encrypted at rest and excluded from metadata. Select Claude Opus, Sonnet, or Haiku from the AI Connection record. Configure system prompt defaults without code changes.

Long-Context Use Cases, Unique to Salesforce + Claude

Account 360 With Complete History

Generate Account intelligence that references specific Opportunities by name, quotes actual Case descriptions, and cites Contact engagement patterns. Claude analyzes 3 years of CRM data in a single prompt.

Case Resolution Intelligence

Send the full Case thread (email-to-case messages, internal comments, related Cases) to Claude for root cause analysis. Trigger this from Salesforce Flow without writing Apex.

Why Choose Anthropic Claude

200K Tokens Per Request

Send complete Account histories, full Case threads, and multi-year Opportunity timelines without truncation. Claude processes the entire Salesforce record graph in a single prompt.

PII Masking on Large Payloads

GPTfy's Security Layer scans and masks PII across the full 200K token payload. The larger your data context, the more essential automated masking becomes for compliance.

Cross-Reference Intelligence

Claude identifies correlations across related objects that truncated models miss: Case escalation patterns tied to renewal risk, Contact engagement gaps aligned with Opportunity stalls.

Powerful Capabilities

200K Token Context Window

Send complete Account histories, multi-year Case threads, and full Opportunity timelines to Claude in a single request. No silent truncation of older records.

Full-Payload PII Masking

GPTfy's Security Layer masks PII across the entire 200K token payload before any data reaches Anthropic. Names, SSNs, emails, and custom patterns are detected and replaced in Apex.

Cross-Object Intelligence

Claude sees correlations across Opportunities, Cases, Contacts, and Activities that truncated models miss. Pattern detection spans the full record history.

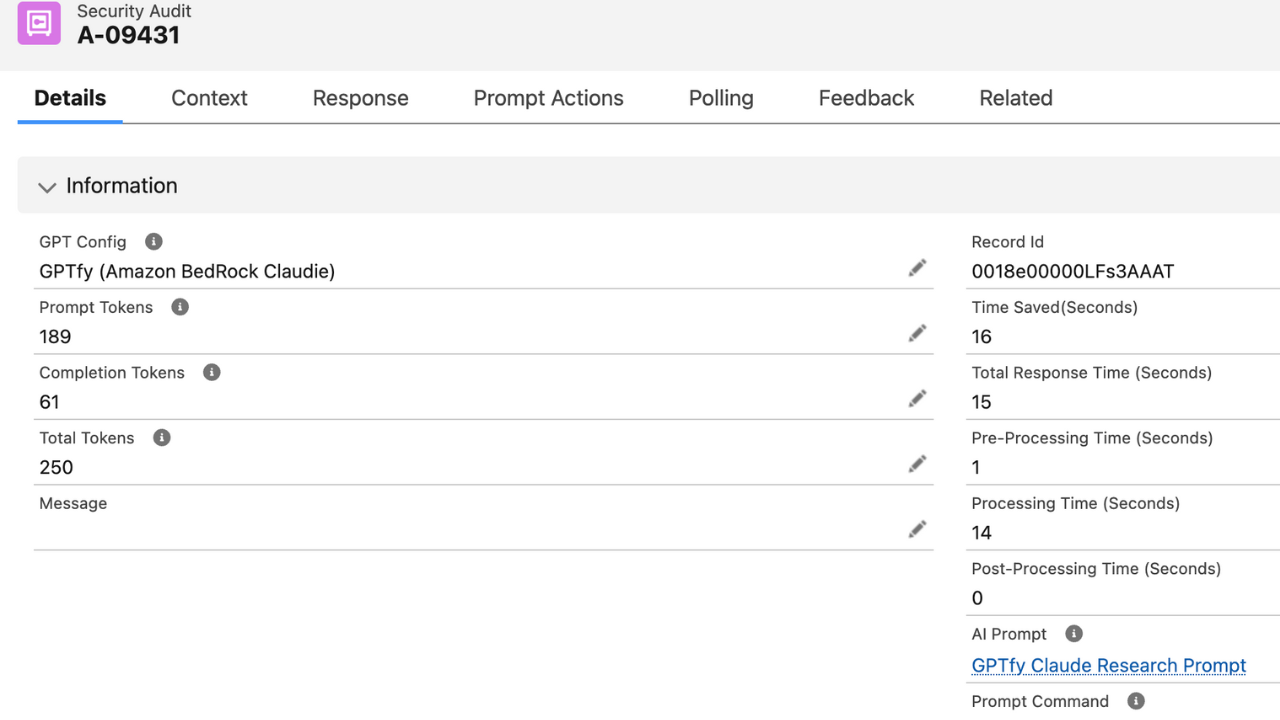

AI Response Audit Trail

Every Claude API call creates an AI Response record in Salesforce: the masked prompt sent, the response received, token count, cost, and timestamp for compliance audit.
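
As a rough illustration of what one such audit entry could hold, here is a minimal Python sketch. It is not GPTfy's schema: the `AIResponseRecord` class, its field names, the `log_call` helper, and the per-million-token prices are all hypothetical, chosen only to mirror the fields listed above.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class AIResponseRecord:
    """Hypothetical mirror of the audit fields described in the text."""
    masked_prompt: str
    response: str
    token_count: int
    cost_usd: float
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def log_call(masked_prompt, response, input_tokens, output_tokens,
             usd_per_million_input, usd_per_million_output):
    """Build one audit record; prices are caller-supplied, not real rates."""
    cost = (input_tokens * usd_per_million_input
            + output_tokens * usd_per_million_output) / 1_000_000
    return AIResponseRecord(
        masked_prompt=masked_prompt,
        response=response,
        token_count=input_tokens + output_tokens,
        cost_usd=round(cost, 6),
    )


# Example values are made up for illustration.
record = log_call(
    "Summarize [EMAIL_1]'s open Cases.", "Two Cases are open.",
    input_tokens=1200, output_tokens=300,
    usd_per_million_input=1.0, usd_per_million_output=2.0,
)
print(asdict(record))
```

Storing only the masked prompt, as the sketch does, keeps raw PII out of the audit log itself.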

Key Takeaways

- Claude's 200K token context window lets GPTfy send complete Account histories (every Opportunity, Case, and Contact) without truncation.

- GPTfy's Security Layer masks PII across the full 200K token payload in Apex before any data reaches Anthropic.

- The Anthropic API key is stored in a Salesforce Named Credential, encrypted at rest and excluded from metadata deployments.

- Supports Claude Opus 4.6, Sonnet 4.6, and Haiku; select the model per AI Connection record without code changes.

- Claude identifies cross-object correlations: Case escalations tied to renewal risk, Opportunity stalls matching support incidents.

- Every Claude request creates an AI_Response__c record with masked prompt, response, token count, and timestamp.

Frequently Asked Questions

Salesforce Accounts accumulate years of related records: Opportunities, Cases, Contacts, Activities, Emails. A typical enterprise Account can generate 50K-150K tokens of context. Models with 4K-32K context windows silently truncate this data, dropping older records. Claude's 200K window lets GPTfy send the complete record without any data loss, which is critical for accurate Account 360 analysis.
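
To see why this kind of truncation goes unnoticed, consider a minimal Python sketch. It is an illustration, not GPTfy code: the `Record` class and `fit_to_window` helper are hypothetical, and the 4-characters-per-token estimate is a rough assumption rather than a real tokenizer. The helper keeps the newest records that fit a token budget and returns the rest, raising no error for what it leaves out.

```python
from dataclasses import dataclass


@dataclass
class Record:
    kind: str        # e.g. "Case" or "Opportunity"
    age_months: int  # how long ago the record was created
    text: str        # serialized record body


def estimate_tokens(text: str) -> int:
    # Rough heuristic (assumption): about 4 characters per token.
    return max(1, len(text) // 4)


def fit_to_window(records, window_tokens):
    """Keep the newest records that fit the budget; return (kept, dropped)."""
    kept, dropped, used = [], [], 0
    for rec in sorted(records, key=lambda r: r.age_months):
        cost = estimate_tokens(rec.text)
        if used + cost <= window_tokens:
            kept.append(rec)
            used += cost
        else:
            dropped.append(rec)  # no exception, no warning
    return kept, dropped


# 36 monthly records of about 2,000 estimated tokens each (72K in total).
history = [Record("Case", m, "x" * 8000) for m in range(36)]

kept_small, dropped_small = fit_to_window(history, 32_000)
kept_large, dropped_large = fit_to_window(history, 200_000)
print(len(kept_small), len(dropped_small))   # 16 20
print(len(kept_large), len(dropped_large))   # 36 0
```

With the 32K budget, everything older than 15 months falls into `dropped_small`, yet the call returns normally. With the 200K budget, the same history fits whole.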

GPTfy's Security Layer uses configurable regex patterns to scan the entire payload in Apex before the API callout. Whether the payload is 5K or 200K tokens, every name, SSN, email, phone number, and custom pattern is masked. The original values are stored in memory for re-insertion after the response returns. Raw data stays in Salesforce, and only masked data reaches Anthropic via Named Credentials.
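
The mask-then-restore flow described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not GPTfy's Apex implementation: the three regex patterns, the placeholder format, and the `mask` and `unmask` helpers are all hypothetical, and name detection is left out because it needs more than a simple pattern.

```python
import re

# Illustrative patterns only; a real configuration would be broader.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def mask(text):
    """Replace each match with a placeholder; return (masked_text, mapping)."""
    mapping = {}
    for label, pattern in PATTERNS.items():
        def substitute(match, label=label):
            value = match.group(0)
            # Reuse the placeholder if the same value was already seen.
            for token, original in mapping.items():
                if original == value:
                    return token
            token = f"[{label}_{len(mapping) + 1}]"
            mapping[token] = value
            return token
        text = pattern.sub(substitute, text)
    return text, mapping


def unmask(text, mapping):
    """Put the original values back into a model response."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


raw = "Jane (jane.doe@example.com, 555-867-5309) reported SSN 123-45-6789."
masked, mapping = mask(raw)
print(masked)
print(unmask(masked, mapping) == raw)
```

The mapping stays on the caller's side, so only the placeholder text would leave the system in this sketch.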

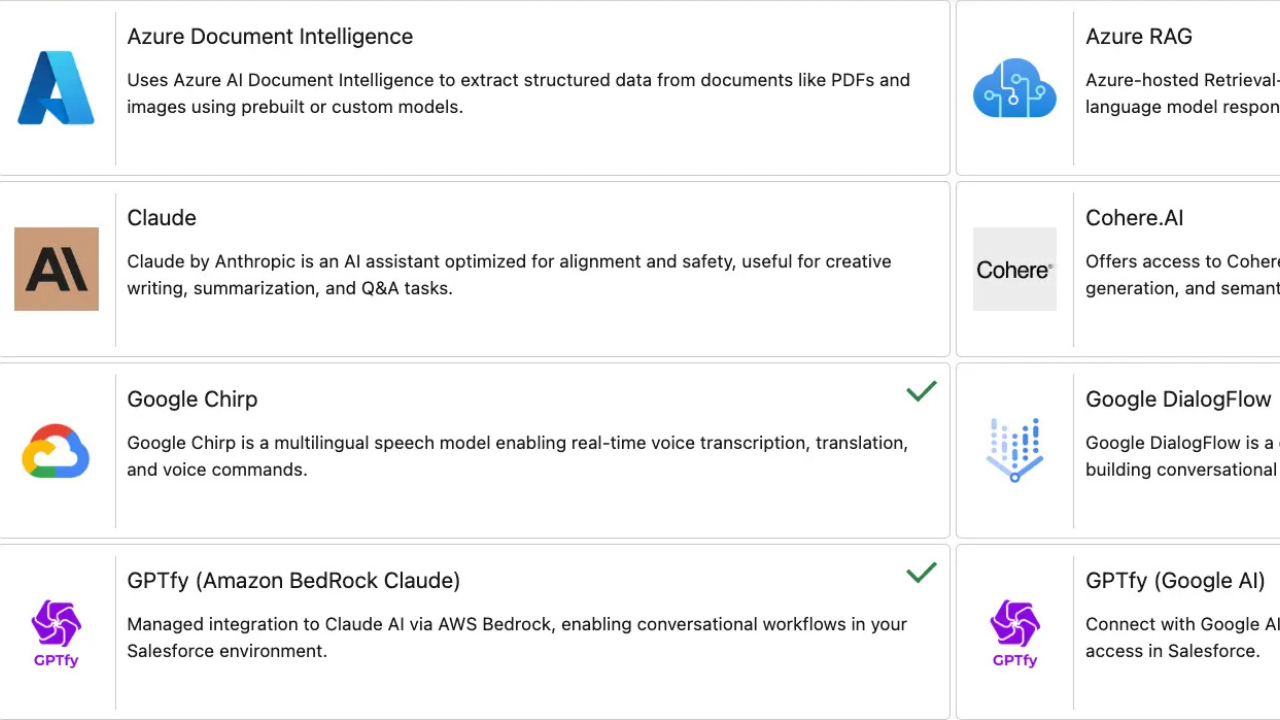

GPTfy supports all Claude models available via the Anthropic Messages API: Claude Opus 4.6, Claude Sonnet 4.6, and Claude Haiku. You select the model on the AI Connection record and can switch models without code changes. Use Haiku for high-volume, low-complexity tasks, Sonnet for balanced performance, and Opus for maximum reasoning depth.
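
For readers curious what a model switch "without code changes" can look like at the request level, here is a hedged Python sketch of assembling an Anthropic Messages API request from configuration. The `connection` dictionary and `build_request` helper are hypothetical stand-ins for an AI Connection record, the model value is a placeholder rather than a real model ID, and the API key is passed as a plain argument purely for illustration (GPTfy keeps it in a Named Credential). The sketch builds the request but does not send it.

```python
import json

API_URL = "https://api.anthropic.com/v1/messages"


def build_request(connection, masked_prompt, api_key):
    """Assemble the URL, headers, and JSON body for one Messages API call."""
    headers = {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "content-type": "application/json",
    }
    body = {
        "model": connection["model"],            # changed via config, not code
        "max_tokens": connection["max_tokens"],
        "system": connection["system_prompt"],
        "messages": [{"role": "user", "content": masked_prompt}],
    }
    return API_URL, headers, json.dumps(body)


# Hypothetical connection settings; the model value is a placeholder.
connection = {
    "model": "<model-id-from-connection-record>",
    "max_tokens": 1024,
    "system_prompt": "You are a CRM analyst. Cite records by name.",
}
url, headers, payload = build_request(
    connection, "Summarize [NAME_1]'s account.", "<api-key>"
)
print(url)
print(payload)
```

Because the model name is read from `connection`, moving between Haiku, Sonnet, and Opus in this sketch means editing one configuration value.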

Native platform trust layers use shorter context windows and do not support direct Anthropic model selection. GPTfy sends the full record context to Claude's 200K token window, masks PII with configurable patterns, and logs every request to an AI Response object. No additional data platform licenses or per-conversation credits required.

Account 360 Intelligence (complete history analysis), Case Resolution Briefs (full thread including email-to-case), Opportunity Win Strategy (all related activities and stakeholder interactions), and Renewal Risk Assessment (multi-year trend analysis). Any use case where truncated data leads to incomplete analysis benefits from the 200K context window.

Create an AI Connection record in GPTfy's managed package with your Anthropic API key. GPTfy stores the key in a Salesforce Named Credential, encrypted at rest. Select Claude Opus, Sonnet, or Haiku, then build prompts via the Prompt Builder with no code required. GPTfy handles PII masking, audit logging, and Named Credential authentication automatically.

See Claude Analyze 3 Years of Salesforce Data

30-minute demo. Complete Account 360 analysis, full Case thread root cause, PII masking across 200K tokens.

Explore More Features

OpenAI in Salesforce

GPT-5.2 with function calling for structured outputs.

DeepSeek R1

Chain-of-thought reasoning at 5-10x lower cost.

Gemini in Salesforce

Multi-modal analysis through your GCP project.

Prompt Builder

Configure and manage AI prompts across models.

Security Layer

PII masking, Named Credentials, and audit trails.

Named Credentials Guide

How Named Credentials secure AI model authentication in Salesforce.

GPTfy vs Einstein

Open BYOM architecture vs Einstein Trust Layer — see the side-by-side breakdown.